Monday, 14 March 2011

Thursday, 10 March 2011

Informatica related videos

It's really good stuff.

Informatica-related videos provided by Sunil Reddy:

http://www.sendspace.com/file/vxh9fa

http://www.sendspace.com/file/fxzqxq

real time templates

Hi Guys,

Satha has provided a very useful website where you can find templates used in real-world projects.

http://www.itap.purdue.edu/ea/standards

Wednesday, 9 March 2011

What is the difference between Union and Joiner?

At first, I was quite confused about this.

Finally, I concluded:

A Joiner combines columns from two sources, whereas a Union combines rows.

In a Joiner, matching rows are placed side by side to form the result table, whereas in a Union, rows are stacked one below the other to form the result table.

In Informatica, the Union transformation behaves like UNION ALL: if you union two tables, it returns all the rows, including duplicates. It does not select only distinct rows.
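To make this concrete, here is a minimal in-memory Java sketch. It is not Informatica code: rows are plain lists, `join` imitates a normal join on one key column, and `unionAll` imitates the Union transformation's UNION ALL behavior. The class, method names and sample data are made up for illustration.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Objects;

// In-memory illustration of Joiner vs Union semantics (not Informatica code).
// Each row is modeled as a List<String> of column values.
public class JoinerVsUnion {

    // Joiner-style normal join: matching rows are placed side by side,
    // so each output row has the columns of both inputs.
    public static List<List<String>> join(List<List<String>> master, List<List<String>> detail,
                                          int masterKey, int detailKey) {
        List<List<String>> out = new ArrayList<>();
        for (List<String> d : detail) {
            for (List<String> m : master) {
                if (Objects.equals(m.get(masterKey), d.get(detailKey))) {
                    List<String> row = new ArrayList<>(m); // master columns first
                    row.addAll(d);                         // then detail columns
                    out.add(row);
                }
            }
        }
        return out;
    }

    // Union-style UNION ALL: rows are stacked one below the other and
    // duplicates are kept. Both inputs must have the same column layout.
    public static List<List<String>> unionAll(List<List<String>> a, List<List<String>> b) {
        List<List<String>> out = new ArrayList<>(a);
        out.addAll(b);
        return out;
    }

    public static void main(String[] args) {
        List<List<String>> depts = List.of(List.of("10", "Sales"), List.of("20", "HR"));
        List<List<String>> emps = List.of(List.of("Ann", "10"), List.of("Bob", "20"));
        System.out.println(join(depts, emps, 0, 1));
        // [[10, Sales, Ann, 10], [20, HR, Bob, 20]]

        List<List<String>> q1 = List.of(List.of("Ann"), List.of("Bob"));
        List<List<String>> q2 = List.of(List.of("Bob"), List.of("Cid"));
        System.out.println(unionAll(q1, q2));
        // [[Ann], [Bob], [Bob], [Cid]]  (Bob appears twice)
    }
}
```

Note how the joined rows get wider (columns from both inputs), while the union gets longer and keeps the duplicate `Bob` row.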

Tuesday, 8 March 2011

Informatica Transformations

A transformation is a repository object that generates, modifies, or passes data. The Designer provides a set of transformations that perform specific functions. For example, an Aggregator transformation performs calculations on groups of data. Transformations can be of two types:

Active Transformation

An active transformation can change the number of rows that pass through it, change the transaction boundary, or change the row type. For example, Filter, Transaction Control and Update Strategy are active transformations.

Note that the Designer does not allow you to connect multiple active transformations, or an active and a passive transformation, to the same downstream transformation or transformation input group, because the Integration Service may not be able to concatenate the rows passed by active transformations. However, the Sequence Generator transformation (SGT) is an exception to this rule. An SGT does not receive data; it generates unique numeric values. As a result, the Integration Service does not encounter problems concatenating rows passed by an SGT and an active transformation.

Passive Transformation

A passive transformation does not change the number of rows that pass through it, maintains the transaction boundary, and maintains the row type.

Note that the Designer allows you to connect multiple transformations to the same downstream transformation or transformation input group only if all transformations in the upstream branches are passive. The transformation that originates the branch can be active or passive.

Transformations can also be connected or unconnected to the data flow.

Connected Transformation

A connected transformation is connected to other transformations or directly to the target table in the mapping.

Unconnected Transformation

An unconnected transformation is not connected to other transformations in the mapping. It is called within another transformation and returns a value to that transformation.

The following transformations are available in Informatica:

Aggregator Transformation

The Aggregator transformation performs aggregate functions such as average, sum and count on multiple rows or groups. The Integration Service performs these calculations as it reads, and stores group and row data in an aggregate cache. It is an Active & Connected transformation.

Difference between the Aggregator and Expression transformations? The Expression transformation lets you perform calculations on a row-by-row basis only, whereas the Aggregator lets you perform calculations on groups of rows.
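A small Java sketch of the difference, assuming made-up sales data: `discounted` works like an Expression (one output per input row), while `sumByState` works like an Aggregator with `state` as the group-by port, using a map in place of the aggregate cache. The names are hypothetical and this is not how the Integration Service is implemented.

```java
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

// Contrasts row-by-row (Expression-style) and group-level (Aggregator-style)
// calculations. Data and names are made up for illustration.
public class ExpressionVsAggregator {

    // Expression-style: one output value per input row, so the row count is unchanged.
    public static double[] discounted(double[] prices, double rate) {
        double[] out = new double[prices.length];
        for (int i = 0; i < prices.length; i++) {
            out[i] = prices[i] * (1 - rate);
        }
        return out;
    }

    // Aggregator-style: SUM(amount) grouped by state, one output row per group.
    // The map plays the role of the aggregate cache.
    public static Map<String, Double> sumByState(List<String> states, double[] amounts) {
        Map<String, Double> cache = new LinkedHashMap<>();
        for (int i = 0; i < amounts.length; i++) {
            cache.merge(states.get(i), amounts[i], Double::sum);
        }
        return cache;
    }

    public static void main(String[] args) {
        List<String> states = List.of("NY", "CA", "NY");
        double[] amounts = {100.0, 50.0, 25.0};
        System.out.println(discounted(amounts, 0.10).length); // 3 rows in, 3 rows out
        System.out.println(sumByState(states, amounts));      // {NY=125.0, CA=50.0}
    }
}
```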

In one example mapping, the Aggregator transformation has the following ports: State, State_Count, Previous_State and State_Counter.

Components: Aggregate Cache, Aggregate Expression, Group By port, Sorted Input.

Aggregate expressions:

- are allowed only in Aggregator transformations;
- can include conditional clauses and non-aggregate functions;
- can include one aggregate function nested inside another aggregate function.

Aggregate functions: AVG, COUNT, FIRST, LAST, MAX, MEDIAN, MIN, PERCENTILE, STDDEV, SUM, VARIANCE.

Application Source Qualifier Transformation

It represents the rows that the Integration Service reads from an application, such as an ERP source, when it runs a session. It is an Active & Connected transformation.

Custom Transformation

It works with procedures you create outside the Designer interface to extend PowerCenter functionality; it calls a procedure from a shared library or DLL. It is an Active/Passive & Connected transformation.

You can use a Custom transformation to create transformations that require multiple input groups and multiple output groups. The Custom transformation allows you to develop the transformation logic in a procedure. Some of the PowerCenter transformations are built using the Custom transformation. Rules that apply to Custom transformations, such as blocking rules, also apply to transformations built using Custom transformations. PowerCenter provides two sets of functions, called generated functions and API functions. The Integration Service uses generated functions to interface with the procedure. When you create a Custom transformation and generate the source code files, the Designer includes the generated functions in the files. Use the API functions in the procedure code to develop the transformation logic.

Difference between the Custom and External Procedure transformations? In a Custom transformation, input and output functions occur separately. The Integration Service passes the input data to the procedure using an input function. The output function is a separate function that you must enter in the procedure code to pass output data to the Integration Service. In contrast, in the External Procedure transformation, a single external procedure function handles both input and output, and its parameters consist of all the ports of the transformation.

Data Masking Transformation

Passive & Connected. It is used to change sensitive production data into realistic test data for non-production environments. It creates masked data for development, testing, training and data mining. Data relationships and referential integrity are maintained in the masked data.

For example, it returns a masked value that has a realistic format for an SSN, credit card number, birth date, phone number, etc., but is not a valid value. Masking types: Key Masking, Random Masking, Expression Masking, Special Mask Formats. The default is no masking.
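The idea behind format-preserving masking can be sketched in a few lines of Java. This is an illustration only, not Informatica's masking algorithm: `mask` swaps each digit for a random digit and keeps separators, and `maskRepeatable` seeds the generator from the input so the same value always masks the same way, which is the spirit of key masking. Both method names and the seeding scheme are assumptions.

```java
import java.util.Random;

// Illustrative format-preserving masking, NOT Informatica's algorithm:
// digits are replaced with random digits and every other character
// (such as '-') is kept, so the result keeps the original layout.
public class MaskDigits {

    public static String mask(String value, Random rng) {
        StringBuilder sb = new StringBuilder(value.length());
        for (char c : value.toCharArray()) {
            boolean digit = c >= '0' && c <= '9';
            sb.append(digit ? (char) ('0' + rng.nextInt(10)) : c);
        }
        return sb.toString();
    }

    // Repeatable variant in the spirit of key masking: the same value and seed
    // always give the same masked output. This seeding scheme is made up.
    public static String maskRepeatable(String value, long seed) {
        return mask(value, new Random(seed ^ value.hashCode()));
    }

    public static void main(String[] args) {
        System.out.println(mask("123-45-6789", new Random()));    // shaped like ddd-dd-dddd
        System.out.println(maskRepeatable("123-45-6789", 1234L)); // same output on every run
    }
}
```

Unlike the real transformation, this sketch does not check that the masked value is invalid (for example, that it is not a real SSN).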

Expression Transformation

Passive & Connected. It is used to perform non-aggregate functions, i.e. to calculate values within a single row. Examples: to calculate the discount for each product, to concatenate first and last names, or to convert a date to a string field.

You can create an Expression transformation in the Transformation Developer or the Mapping Designer. Components: Transformation, Ports, Properties, Metadata Extensions.

External Procedure Transformation

Passive & Connected or Unconnected. It works with procedures you create outside of the Designer interface to extend PowerCenter functionality. You can create complex functions within a DLL or in the COM layer of Windows and bind them to an External Procedure transformation. To get this kind of extensibility, use the Transformation Exchange (TX) dynamic invocation interface built into PowerCenter. You must be an experienced programmer to use TX and to use multi-threaded code in external procedures.

Filter Transformation

Active & Connected. It passes rows that meet the specified filter condition and removes rows that do not. For example, you can use it to find all employees who work in New York, or all faculty members teaching Chemistry in a state. The input ports for the filter must come from a single transformation; you cannot concatenate ports from more than one transformation into the Filter transformation. Components: Transformation, Ports, Properties, Metadata Extensions.

HTTP Transformation

Passive & Connected. It allows you to connect to an HTTP server to use its services and applications. With an HTTP transformation, the Integration Service connects to the HTTP server, issues a request to retrieve data from or post data to it, and passes the result to the target or a downstream transformation in the mapping.

Authentication types: Basic, Digest and NTLM. Request methods: GET, POST and SIMPLE POST.

Java Transformation

Active or Passive & Connected. It provides a simple native programming interface to define transformation functionality with the Java programming language. You can use the Java transformation to quickly define simple or moderately complex transformation functionality without advanced knowledge of the Java programming language or an external Java development environment.

Joiner Transformation

Active & Connected. It is used to join data from two related heterogeneous sources residing in different locations, or to join data from the same source. To join two sources, there must be at least one pair of matching columns between them, and you must specify one source as the master and the other as the detail. For example: to join a flat file and a relational source, to join two flat files, or to join a relational source and an XML source.

The Joiner transformation supports the following types of joins:

- Normal

Normal join discards all the rows of data from the master and detail source that do not match, based on the condition.

- Master Outer

Master outer join discards all the unmatched rows from the master source and keeps all the rows from the detail source and the matching rows from the master source.

- Detail Outer

Detail outer join keeps all rows of data from the master source and the matching rows from the detail source. It discards the unmatched rows from the detail source.

- Full Outer

Full outer join keeps all rows of data from both the master and detail sources.
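The four join types can be sketched in Java as below. Rows are `{key, value}` pairs, unmatched sides are shown as `null`, and the class and data are hypothetical; the point is only which unmatched rows each join type keeps.

```java
import java.util.ArrayList;
import java.util.HashSet;
import java.util.List;
import java.util.Objects;
import java.util.Set;

// Models the four Joiner join types on single-key rows {key, value}.
// Unmatched sides are rendered as "null". Illustration only.
public class JoinerTypes {

    public enum JoinType { NORMAL, MASTER_OUTER, DETAIL_OUTER, FULL_OUTER }

    public static List<String> join(List<String[]> master, List<String[]> detail, JoinType type) {
        List<String> out = new ArrayList<>();
        Set<Integer> matchedMaster = new HashSet<>();
        for (String[] d : detail) {
            boolean matched = false;
            for (int i = 0; i < master.size(); i++) {
                String[] m = master.get(i);
                if (Objects.equals(m[0], d[0])) {
                    out.add(m[1] + "|" + d[1]);
                    matchedMaster.add(i);
                    matched = true;
                }
            }
            // Master outer and full outer keep unmatched DETAIL rows.
            if (!matched && (type == JoinType.MASTER_OUTER || type == JoinType.FULL_OUTER)) {
                out.add("null|" + d[1]);
            }
        }
        // Detail outer and full outer keep unmatched MASTER rows.
        if (type == JoinType.DETAIL_OUTER || type == JoinType.FULL_OUTER) {
            for (int i = 0; i < master.size(); i++) {
                if (!matchedMaster.contains(i)) {
                    out.add(master.get(i)[1] + "|null");
                }
            }
        }
        return out;
    }

    public static void main(String[] args) {
        List<String[]> master = List.of(new String[]{"1", "M1"}, new String[]{"2", "M2"});
        List<String[]> detail = List.of(new String[]{"1", "D1"}, new String[]{"3", "D3"});
        for (JoinType t : JoinType.values()) {
            System.out.println(t + " -> " + join(master, detail, t));
        }
        // NORMAL -> [M1|D1]
        // MASTER_OUTER -> [M1|D1, null|D3]
        // DETAIL_OUTER -> [M1|D1, M2|null]
        // FULL_OUTER -> [M1|D1, null|D3, M2|null]
    }
}
```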

Limitations on the pipelines you connect to the Joiner transformation:

*You cannot use a Joiner transformation when either input pipeline contains an Update Strategy transformation.

*You cannot use a Joiner transformation if you connect a Sequence Generator transformation directly before the Joiner transformation.

Lookup Transformation

Passive & Connected or Unconnected. It is used to look up data in a flat file, relational table, view, or synonym. It compares Lookup transformation ports (input ports) to the source column values based on the lookup condition, and the returned values can then be passed to other transformations. You can create a lookup definition from a source qualifier, and you can use multiple Lookup transformations in a mapping.

You can perform the following tasks with a Lookup transformation:

*Get a related value. Retrieve a value from the lookup table based on a value in the source. For example, the source has an employee ID. Retrieve the employee name from the lookup table.

*Perform a calculation. Retrieve a value from a lookup table and use it in a calculation. For example, retrieve a sales tax percentage, calculate a tax, and return the tax to a target.

*Update slowly changing dimension tables. Determine whether rows exist in a target.

Lookup Components: Lookup source, Ports, Properties, Condition.

Types of Lookup:

1) Relational or flat file lookup.

2) Pipeline lookup.

3) Cached or uncached lookup.

4) Connected or unconnected lookup.
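Here is a minimal Java sketch of a cached lookup used to get a related value (employee name from employee ID). It assumes the lookup source is already in memory as `{id, name}` rows; the class name and data are made up. An uncached lookup would instead query the lookup source for every input row.

```java
import java.util.HashMap;
import java.util.List;
import java.util.Map;

// Sketch of a cached lookup: the lookup source (employee ID -> name) is read
// once into an in-memory cache, then each source row probes the cache on the
// lookup condition (id = input id). Names and data are hypothetical.
public class CachedLookup {

    private final Map<Integer, String> cache = new HashMap<>();

    public CachedLookup(List<Object[]> lookupRows) {
        for (Object[] r : lookupRows) {
            // If the lookup source has duplicate keys, this sketch keeps the first row.
            cache.putIfAbsent((Integer) r[0], (String) r[1]);
        }
    }

    // Returns the related value, or null when no lookup row matches.
    public String lookup(int empId) {
        return cache.get(empId);
    }

    public static void main(String[] args) {
        CachedLookup lkp = new CachedLookup(List.of(
                new Object[]{101, "Ann"}, new Object[]{102, "Bob"}));
        for (int id : new int[]{101, 103}) {
            System.out.println(id + " -> " + lkp.lookup(id)); // 101 -> Ann, then 103 -> null
        }
    }
}
```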

Normalizer Transformation

Active & Connected. The Normalizer transformation processes multiple-occurring columns or multiple-occurring groups of columns in each source row and returns a row for each instance of the multiple-occurring data. It is used mainly with COBOL sources, where data is often stored in denormalized form.

You can create the following Normalizer transformations:

*VSAM Normalizer transformation. A non-reusable transformation that is a Source Qualifier transformation for a COBOL source. VSAM stands for Virtual Storage Access Method, a file access method for IBM mainframes.

*Pipeline Normalizer transformation. A transformation that processes multiple-occurring data from relational tables or flat files. This is the default when you create a Normalizer transformation.

Components: Transformation, Ports, Properties, Normalizer, Metadata Extensions.
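A minimal Java sketch of what normalizing a multiple-occurring column means, assuming a hypothetical source row with one store and four quarterly sales values (like a COBOL `OCCURS 4` field). Each input row becomes four output rows, one per occurrence. This is an illustration, not the Normalizer's implementation.

```java
import java.util.ArrayList;
import java.util.List;

// Sketch of normalizing a multiple-occurring column: one input row with a
// store and four quarterly sales values becomes one output row per occurrence.
// Field names and the CSV-style output are illustrative only.
public class NormalizeQuarters {

    public static List<String> normalize(String store, int[] quarterlySales) {
        List<String> out = new ArrayList<>();
        for (int i = 0; i < quarterlySales.length; i++) {
            // i + 1 is the 1-based occurrence number
            out.add(store + ",Q" + (i + 1) + "," + quarterlySales[i]);
        }
        return out;
    }

    public static void main(String[] args) {
        normalize("S1", new int[]{10, 20, 30, 40}).forEach(System.out::println);
        // S1,Q1,10
        // S1,Q2,20
        // S1,Q3,30
        // S1,Q4,40
    }
}
```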

Rank Transformation

Active & Connected. It is used to select the top or bottom rank of data. You can use it to return the largest or smallest numeric values in a port or group, or to return the strings at the top or bottom of a session sort order. For example, to select the top 10 regions by sales volume, or the 10 lowest-priced products. As an active transformation, it can change the number of rows passed through it: if you pass 100 rows to the Rank transformation but choose to rank only the top 10, only those 10 rows pass from the Rank transformation to the next transformation. You can connect ports from only one transformation to the Rank transformation. You can also create local variables and write non-aggregate expressions.
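The Rank behavior can be sketched in Java as "top N rows per group". The rows, the region group-by column and the sales rank column are made up; the sketch is only meant to show why the transformation is active (4 rows in, 3 rows out here).

```java
import java.util.ArrayList;
import java.util.Comparator;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

// Sketch of Rank-transformation behavior: keep the top N rows per group by a
// numeric rank column. Rows are {region, product, sales}; data is made up.
public class TopNPerGroup {

    public static Map<String, List<String>> topN(List<Object[]> rows, int n) {
        Map<String, List<Object[]>> groups = new LinkedHashMap<>();
        for (Object[] r : rows) {
            groups.computeIfAbsent((String) r[0], k -> new ArrayList<>()).add(r);
        }
        Map<String, List<String>> out = new LinkedHashMap<>();
        for (Map.Entry<String, List<Object[]>> e : groups.entrySet()) {
            List<Object[]> g = e.getValue();
            // Highest sales first ("Top"); use the natural order for "Bottom".
            g.sort(Comparator.comparingInt((Object[] r) -> (Integer) r[2]).reversed());
            List<String> kept = new ArrayList<>();
            for (int i = 0; i < Math.min(n, g.size()); i++) {
                kept.add((String) g.get(i)[1]);
            }
            out.put(e.getKey(), kept);
        }
        return out;
    }

    public static void main(String[] args) {
        List<Object[]> rows = List.of(
                new Object[]{"East", "A", 50}, new Object[]{"East", "B", 90},
                new Object[]{"East", "C", 70}, new Object[]{"West", "D", 10});
        System.out.println(topN(rows, 2)); // {East=[B, C], West=[D]}
    }
}
```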

Router Transformation

Active & Connected. It is similar to the Filter transformation because both let you apply a condition to test data. The difference is that the Filter transformation drops rows that do not meet the condition, whereas the Router has an option to capture rows that do not meet any condition and route them to a default output group. If you need to test the same input data against multiple conditions, use a Router transformation in a mapping instead of creating multiple Filter transformations to perform the same task. The Router transformation is more efficient.
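The difference between Filter and Router can be sketched in Java as follows. The city data and group names are hypothetical. In `route`, a row that satisfies several group conditions goes to each of those groups, and a row that satisfies none goes to `DEFAULT`; `filter` simply drops non-matching rows.

```java
import java.util.ArrayList;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;
import java.util.function.Predicate;

// Contrast of Filter and Router semantics on city names (illustration only).
public class FilterVsRouter {

    // Filter: rows that fail the condition are dropped.
    public static List<String> filter(List<String> rows, Predicate<String> cond) {
        List<String> out = new ArrayList<>();
        for (String r : rows) {
            if (cond.test(r)) {
                out.add(r);
            }
        }
        return out;
    }

    // Router: each row is tested against every group condition and goes to every
    // group it satisfies; rows that match no group go to the DEFAULT group.
    public static Map<String, List<String>> route(List<String> rows,
                                                  Map<String, Predicate<String>> groups) {
        Map<String, List<String>> out = new LinkedHashMap<>();
        groups.keySet().forEach(g -> out.put(g, new ArrayList<>()));
        out.put("DEFAULT", new ArrayList<>());
        for (String r : rows) {
            boolean matched = false;
            for (Map.Entry<String, Predicate<String>> g : groups.entrySet()) {
                if (g.getValue().test(r)) {
                    out.get(g.getKey()).add(r);
                    matched = true;
                }
            }
            if (!matched) {
                out.get("DEFAULT").add(r);
            }
        }
        return out;
    }

    public static void main(String[] args) {
        List<String> cities = List.of("NewYork", "Boston", "Chicago");
        System.out.println(filter(cities, c -> c.equals("NewYork"))); // [NewYork]

        Map<String, Predicate<String>> groups = new LinkedHashMap<>();
        groups.put("NY", c -> c.equals("NewYork"));
        groups.put("EAST", c -> c.equals("NewYork") || c.equals("Boston"));
        System.out.println(route(cities, groups));
        // {NY=[NewYork], EAST=[NewYork, Boston], DEFAULT=[Chicago]}
    }
}
```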

Sequence Generator Transformation

Passive & Connected transformation. It is used to create unique primary key values, to cycle through a sequential range of numbers, or to replace missing primary keys.

It has two output ports: NEXTVAL and CURRVAL. You cannot edit or delete these ports, and you cannot add ports to the transformation. Connect the NEXTVAL port to a transformation or target to generate a sequence of numbers. CURRVAL is the NEXTVAL value plus one, or NEXTVAL plus the Increment By value. You can make a Sequence Generator reusable and use it in multiple mappings. You might reuse a Sequence Generator when you perform multiple loads to a single target.

For non-reusable Sequence Generator transformations, Number of Cached Values is set to zero by default, and the Integration Service does not cache values during the session.

For non-reusable Sequence Generator transformations, setting Number of Cached Values greater than zero can increase the number of times the Integration Service accesses the repository during the session. It also causes sections of skipped values, since unused cached values are discarded at the end of each session.
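To see why caching can skip values, here is a simplified Java model of a non-reusable Sequence Generator. The way it stores and reserves values is an assumption made for illustration, not Informatica's internal logic: with Number of Cached Values = 0 it reads the current value at session start and writes it back at session end, and with a cache it reserves blocks of values up front, so unused values in the last block are lost.

```java
// Simplified model of a non-reusable Sequence Generator and its
// "Number of Cached Values" setting. The repository bookkeeping below is an
// assumption made for illustration, not Informatica's internal code.
// Assumes a positive Increment By value.
public class SequenceSketch {

    private long repositoryValue;      // value persisted between sessions
    private final long incrementBy;
    private final int cachedValues;    // 0 = no caching
    private long next;                 // next value NEXTVAL will return
    private long blockEnd;             // first value outside the reserved block
    private int repositoryAccesses;

    public SequenceSketch(long startValue, long incrementBy, int cachedValues) {
        this.repositoryValue = startValue;
        this.incrementBy = incrementBy;
        this.cachedValues = cachedValues;
    }

    public void startSession() {
        next = repositoryValue;        // read the current value
        blockEnd = next;               // nothing reserved yet
        repositoryAccesses++;
    }

    public long nextVal() {
        if (cachedValues > 0 && next == blockEnd) {
            // Reserve a block of values by advancing the stored value.
            blockEnd = next + incrementBy * cachedValues;
            repositoryValue = blockEnd;
            repositoryAccesses++;
        }
        long value = next;
        next += incrementBy;
        return value;
    }

    // CURRVAL as described above: NEXTVAL plus the Increment By value.
    public long currVal(long nextVal) {
        return nextVal + incrementBy;
    }

    public void endSession() {
        if (cachedValues == 0) {
            repositoryValue = next;    // write back exactly where we stopped
            repositoryAccesses++;
        }
        // With caching, repositoryValue already points past the reserved block,
        // so unused cached values are skipped by the next session.
    }

    public int repositoryAccesses() {
        return repositoryAccesses;
    }

    public static void main(String[] args) {
        SequenceSketch seq = new SequenceSketch(1, 1, 5);
        seq.startSession();
        System.out.println(seq.nextVal() + " " + seq.nextVal() + " " + seq.nextVal()); // 1 2 3
        seq.endSession();
        seq.startSession();
        System.out.println(seq.nextVal()); // 6 (4 and 5 were cached but never used)
    }
}
```

In this model, generating 10 values with a cache of 2 costs 6 repository accesses, versus 2 with no cache, which is the trade-off the two paragraphs above describe.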

For reusable Sequence Generator transformations, you can reduce Number of Cached Values to minimize discarded values; however, it must be greater than one. When you reduce the Number of Cached Values, you might increase the number of times the Integration Service accesses the repository to cache values during the session.

Sorter Transformation

Active & Connected transformation. It is used to sort data in either ascending or descending order according to a specified sort key. You can also configure the Sorter transformation for case-sensitive sorting and specify whether the output rows should be distinct. When you create a Sorter transformation in a mapping, you specify one or more ports as a sort key and configure each sort key port to sort in ascending or descending order.
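A small Java sketch of the Sorter options described above, with hypothetical single-column string rows: sort direction, case sensitivity and the distinct option. It relies on `List.sort`, which is a stable sort, so rows that compare equal keep their input order. It is an illustration, not the Sorter's implementation.

```java
import java.util.ArrayList;
import java.util.Comparator;
import java.util.LinkedHashSet;
import java.util.List;

// Sketch of Sorter options on single-column string rows (illustration only).
public class SorterSketch {

    public static List<String> sort(List<String> rows, boolean ascending,
                                    boolean caseSensitive, boolean distinct) {
        Comparator<String> cmp = caseSensitive ? Comparator.<String>naturalOrder()
                                               : String.CASE_INSENSITIVE_ORDER;
        if (!ascending) {
            cmp = cmp.reversed();
        }
        List<String> out = new ArrayList<>(rows);
        out.sort(cmp); // stable: equal keys keep their input order
        if (distinct) {
            // Drops exact duplicate rows while keeping the sorted order.
            out = new ArrayList<>(new LinkedHashSet<>(out));
        }
        return out;
    }

    public static void main(String[] args) {
        List<String> rows = List.of("b", "A", "a", "B", "a");
        System.out.println(sort(rows, true, true, true));   // [A, B, a, b]
        System.out.println(sort(rows, true, false, false)); // [A, a, a, b, B]
    }
}
```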

Source Qualifier Transformation

Active & Connected transformation. When you add a relational or flat file source definition to a mapping, you need to connect it to a Source Qualifier transformation. The Source Qualifier is used to join data originating from the same source database, filter rows when the Integration Service reads source data, specify an outer join rather than the default inner join, and specify sorted ports.

It is also used to select only distinct values from the source, and to create a custom query that issues a special SELECT statement for the Integration Service to read source data.

SQL Transformation

Active/Passive & Connected transformation. The SQL transformation processes SQL queries midstream in a pipeline. You can insert, delete, update, and retrieve rows from a database. You can pass the database connection information to the SQL transformation as input data at run time. The transformation processes external SQL scripts or SQL queries that you create in an SQL editor. The SQL transformation processes the query and returns rows and database errors.

Stored Procedure Transformation

Passive & Connected or Unconnected transformation. It is useful for automating time-consuming tasks, and it is also used for error handling, dropping and recreating indexes, determining the space in a database, performing specialized calculations, etc. The stored procedure must exist in the database before you create a Stored Procedure transformation, and it can exist in a source, target, or any database with a valid connection to the Informatica Server. A stored procedure is an executable script containing SQL statements and control statements, user-defined variables and conditional statements.

Transaction Control Transformation

Active & Connected. You can control commit and rollback of transactions based on a set of rows that pass through a Transaction Control transformation. Transaction control can be defined within a mapping or within a session.

Components: Transformation, Ports, Properties, Metadata Extensions.
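A common use is to commit whenever a key value changes. The Java sketch below is a simplified model, assuming rows arrive sorted by a hypothetical department key and that a condition like `IIF(DEPT <> PREV_DEPT, TC_COMMIT_BEFORE, TC_CONTINUE_TRANSACTION)` is used; the enum only mirrors the names of those built-in values, and in this model the remaining rows are committed at end of data.

```java
import java.util.ArrayList;
import java.util.List;

// Simplified model of a Transaction Control condition such as
//   IIF(DEPT <> PREV_DEPT, TC_COMMIT_BEFORE, TC_CONTINUE_TRANSACTION)
// Rows arrive sorted by department; a new transaction starts whenever the
// department changes. Not Informatica code.
public class TransactionControlSketch {

    enum Tc { TC_CONTINUE_TRANSACTION, TC_COMMIT_BEFORE }

    public static List<List<String>> commitByKey(List<String> depts) {
        List<List<String>> committed = new ArrayList<>();
        List<String> current = new ArrayList<>();
        String prev = null;
        for (String dept : depts) {
            Tc action = (prev != null && !prev.equals(dept))
                    ? Tc.TC_COMMIT_BEFORE : Tc.TC_CONTINUE_TRANSACTION;
            if (action == Tc.TC_COMMIT_BEFORE) {
                committed.add(current);      // commit the rows seen so far
                current = new ArrayList<>(); // this row opens a new transaction
            }
            current.add(dept);
            prev = dept;
        }
        if (!current.isEmpty()) {
            committed.add(current);          // end of data: commit the rest
        }
        return committed;
    }

    public static void main(String[] args) {
        System.out.println(commitByKey(List.of("10", "10", "20", "30", "30")));
        // [[10, 10], [20], [30, 30]]
    }
}
```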

Union Transformation

Active & Connected. The Union transformation is a multiple input group transformation that you use to merge data from multiple pipelines or pipeline branches into one pipeline branch. It merges data from multiple sources similar to the UNION ALL SQL statement to combine the results from two or more SQL statements. Similar to the UNION ALL statement, the Union transformation does not remove duplicate rows.

Rules

1) You can create multiple input groups, but only one output group.

2) All input groups and the output group must have matching ports. The precision, datatype, and scale must be identical across all groups.

3) The Union transformation does not remove duplicate rows. To remove duplicate rows, you must add another transformation such as a Router or Filter transformation.

4) You cannot use a Sequence Generator or Update Strategy transformation upstream from a Union transformation.

5) The Union transformation does not generate transactions.

Components: Transformation tab, Properties tab, Groups tab, Group Ports tab.

Unstructured Data Transformation

Active/Passive and connected. The Unstructured Data transformation is a transformation that processes unstructured and semi-structured file formats, such as messaging formats, HTML pages and PDF documents. It also transforms structured formats such as ACORD, HIPAA, HL7, EDI-X12, EDIFACT, AFP, and SWIFT.

Components: Transformation, Properties, UDT Settings, UDT Ports, Relational Hierarchy.

Update Strategy Transformation

Active & Connected transformation. It is used to update data in the target table, either to maintain a history of the data or to keep only the most recent changes. It flags rows for insert, update, delete or reject within a mapping.
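A typical Update Strategy expression checks a lookup on the target and flags new keys for insert and existing keys for update. The Java sketch below models that decision. The constant values match Informatica's `DD_INSERT` (0), `DD_UPDATE` (1), `DD_DELETE` (2) and `DD_REJECT` (3); the method name, the map standing in for a target lookup, and the rule that rows without a name are rejected are assumptions for illustration.

```java
import java.util.Map;

// Models the decision an Update Strategy expression typically encodes,
// e.g. IIF(ISNULL(LKP_EMP_ID), DD_INSERT, DD_UPDATE). Not Informatica code.
public class UpdateStrategySketch {

    // Values of Informatica's DD_ constants.
    public static final int DD_INSERT = 0;
    public static final int DD_UPDATE = 1;
    public static final int DD_DELETE = 2;
    public static final int DD_REJECT = 3;

    // targetById stands in for a Lookup cache on the target table (id -> name).
    // Rejecting rows with a null name is a made-up rule for this example.
    public static int flag(int empId, String name, Map<Integer, String> targetById) {
        if (name == null) {
            return DD_REJECT;
        }
        return targetById.containsKey(empId) ? DD_UPDATE : DD_INSERT;
    }

    public static void main(String[] args) {
        Map<Integer, String> target = Map.of(101, "Ann");
        System.out.println(flag(101, "Ann Lee", target)); // 1 (DD_UPDATE: key exists)
        System.out.println(flag(102, "Bob", target));     // 0 (DD_INSERT: new key)
        System.out.println(flag(103, null, target));      // 3 (DD_REJECT)
    }
}
```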

XML Generator Transformation

Active & Connected transformation. It lets you create XML inside a pipeline. The XML Generator transformation accepts data from multiple ports and writes XML through a single output port.

XML Parser Transformation

Active & Connected transformation. The XML Parser transformation lets you extract XML data from messaging systems, such as TIBCO or MQ Series, and from other sources, such as files or databases. Its functionality is similar to the XML source functionality, except that it parses the XML in the pipeline.

XML Source Qualifier Transformation

Active & Connected transformation. The XML Source Qualifier is used only with an XML source definition. It represents the data elements that the Informatica Server reads when it executes a session with XML sources. It has one input or output port for every column in the XML source.

External Procedure Transformation

Passive & Connected or Unconnected transformation. Sometimes the standard transformations, such as the Expression transformation, may not provide the functionality you want. In such cases, the External Procedure transformation is useful for developing complex functions within a dynamic link library (DLL) or UNIX shared library, instead of creating the necessary Expression transformations in a mapping.

Active & Connected transformation. It operates in conjunction with procedures, which are created outside of the Designer interface to extend PowerCenter/PowerMart functionality. It is useful in creating external transformation applications, such as sorting and aggregation, which require all input rows to be processed before emitting any output rows.

Using Java Transformation

A Java transformation provides a native programming interface to define transformation functionality with the Java programming language. You can use a Java transformation to define simple or moderately complex transformation functionality without advanced knowledge of the Java programming language or without using an external Java development environment.

Types of Java transformation:

· Active: Can generate zero, one or more output rows for each input row in the transformation.

· Passive: Generates one output row for each input row in the transformation.

Example :

Source Table:

| Col1 | Col2 | Col3 |
|------|------|------|
| 11 | 12 | 13 |
| 21 | 22 | 23 |
| 31 | 32 | 33 |

Our requirement is to transpose the values using a Java transformation, as shown below:

Target Table:

| Col1 | Col2 | Col3 |
|------|------|------|
| 11 | 21 | 31 |
| 12 | 22 | 32 |
| 13 | 23 | 33 |

Design a mapping as shown below.

Create the source and target definitions, and connect all the input and output ports as shown above.

Note that you must select the type “Active” for the Java transformation.

The Ports tab will look as shown below:

Go to the Java Code tab and select the Helper Code tab. We define all the required variables here.

In the On Input Row tab, we store the values of each incoming row in the buffer, in order.

In the On End of Data tab, we define our logic and generate the output values.
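Here is a standalone Java sketch of the three sections described above. It is a simulation outside PowerCenter: the three sections are plain methods and a list stands in for the output pipeline. Inside a real Java transformation you would typically read the input port variables in On Input Row and, in On End of Data, assign the output ports and call `generateRow()` once per output row. The class, method and field names here are hypothetical.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;

// Standalone simulation of the Helper Code / On Input Row / On End of Data
// sections for the transpose example. Not PowerCenter code.
public class TransposeSketch {

    // --- Helper Code: state shared across rows ---
    private final List<int[]> buffer = new ArrayList<>();
    private final List<int[]> output = new ArrayList<>();

    // --- On Input Row: buffer each incoming row in arrival order ---
    public void onInputRow(int col1, int col2, int col3) {
        buffer.add(new int[]{col1, col2, col3});
    }

    // --- On End of Data: emit column j of every buffered row as output row j ---
    public void onEndOfData() {
        int columns = 3;
        for (int j = 0; j < columns; j++) {
            int[] out = new int[buffer.size()];
            for (int i = 0; i < buffer.size(); i++) {
                out[i] = buffer.get(i)[j];
            }
            output.add(out); // real transformation: set the output ports, then generateRow()
        }
    }

    public List<int[]> output() {
        return output;
    }

    public static void main(String[] args) {
        TransposeSketch t = new TransposeSketch();
        t.onInputRow(11, 12, 13);
        t.onInputRow(21, 22, 23);
        t.onInputRow(31, 32, 33);
        t.onEndOfData();
        for (int[] row : t.output()) {
            System.out.println(Arrays.toString(row));
        }
        // [11, 21, 31]
        // [12, 22, 32]
        // [13, 23, 33]
    }
}
```

Because the target has three columns, this transpose only fits when exactly three rows arrive; a different row count would need a different target layout.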

ETL Process

¨ 1.0 Analyze Business Requirements Documentation – In this process you should understand the business needs by gathering information from the user. You should understand what data is needed and whether it is available. Resources should be identified for information or help with the process.

o Deliverables

§ A logical description of how you will extract, transform, and load the data.

§ Sign-off of the customer(s).

o Standards

§ Document ETL business requirements specification using the ETL Business Requirements Specification Template, your own team-specific business requirements template or system, or Oracle Designer.

o Templates

§ ETL Business Requirements Specification Template

¨ 2.0 Create Physical Design – In this process you should define your inputs and outputs by documenting record layouts. You should also identify and define the location of the source and target, file/table sizing information, volume information, and how the data will be transformed.

o Deliverables

§ Input and output record layouts

§ Location of source and target

§ File/table sizing information

§ File/table volume information

§ Documentation on how the data will be transformed, if at all

o Standards

§ Complete ETL Business Requirements Specification using one of the methods documented in the previous steps.

§ Start ETL Mapping Specification

o Templates

§ ETL Business Requirements Specification Template

§ ETL Mapping Specification Template

¨ 3.0 Design Test Plan – Understand what the data combinations are and define what results are expected. Remember to include error checks. Decide how many test cases need to be built. Look at technical risk and include security. Test business requirements.

o Deliverables

§ ETL Test Plan

§ ETL Performance Test Plan

o Standards

§ Document ETL test plan and performance plan using either the standard templates listed below or your own team-specific template(s).

o Templates

§ ETL Test Plan Template

§ ETL Performance Test Plan Template

¨ 4.0 Create ETL Process – Start creating the actual Informatica ETL process. The developer also does some testing in this step.

o Deliverables

§ Mapping Specification

§ Mapping

§ Workflow

§ Session

o Standards

§ Start the ETL Object Migration Form

§ Start Database Object Migration Form (if applicable)

§ Complete ETL Mapping Specification

§ Complete cleanup process for log and bad files – Refer to Standard_ETL_File_Cleanup.doc

§ Follow Informatica Naming Standards

o Templates

§ ETL Object Migration Form

§ ETL Mapping Specification Template

§ Database Object Migration Form (if applicable)

¨ 5.0 Test Process – The developer does the following types of tests: unit, volume, and performance.

o Deliverables

§ ETL Test Plan

§ ETL Performance Test Plan

o Standards

§ Complete ETL Test Plan

§ Complete ETL Performance Test Plan

o Templates

§ ETL Test Plan Template

§ ETL Performance Test Plan Template

¨ 6.0 Walk Through ETL Process – The walkthrough should address the following factors: common modules (reusable objects), efficiency of the ETL code, the business logic, accuracy, and standardization.

o Deliverables

§ ETL process that has been reviewed

o Standards

§ Conduct ETL Process Walkthrough

o Templates

§ ETL Mapping Walkthrough Checklist Template

¨ 7.0 Coordinate Move to QA – The developer works with the ETL Administrator to organize moving the ETL process to QA.

o Deliverables

§ ETL process moved to QA

o Standards

§ Complete ETL Object Migration Form

§ Complete Unix Job Setup Request Form

§ Complete Database Object Migration Form (if applicable)

o Templates

§ ETL Object Migration Form

§ Unix Job Setup Request Form

§ Database Object Migration Form

¨ 8.0 Test Process – At this point, the developer once again tests the process after it has been moved to QA.

o Deliverables

§ Tested ETL process

o Standards

§ Developer validates ETL Test Plan and ETL Performance Test Plan

o Templates

§ ETL Test Plan Template

§ ETL Performance Test Plan Template

¨ 9.0 User Validates Data – The user validates the data and makes sure it satisfies the business requirements.

o Deliverables

§ Validated ETL process

o Standards

§ Validate Business Requirement Specifications with the data

o Templates

§ ETL Business Requirement Specifications Template

¨ 10.0 Coordinate Move to Production - The developer works with the ETL Administrator to organize moving the ETL process to Production.

o Deliverables

§ Accurate and efficient ETL process moved to production

o Standards

§ Complete ETL Object Migration Form

§ Complete Unix Job Setup Request Form

§ Complete Database Object Migration Form (if applicable)

o Templates

§ ETL Object Migration Form

§ Unix Job Setup Request Form

§ Database Object Migration Form (if applicable)

¨ 11.0 Maintain ETL Process – There are a couple of situations to consider when maintaining an ETL process: maintenance when an ETL process breaks, and maintenance when an ETL process needs to be updated.

o Deliverables

§ Accurate and efficient ETL process in production

o Standards

§ Updated Business Requirements Specification (if needed)

§ Updated Mapping Specification (if needed)

§ Revised mapping in appropriate folder

§ Updated ETL Object Migration Form

§ Developer checks final results in production

§ All monitoring (finding problems) of the ETL process is the responsibility of the project team

o Templates

§ Business Requirements Specification Template

§ Mapping Specification Template

§ ETL Object Migration Form

§ Unix Job Setup Request Form

§ Database Object Migration Form (if applicable)

SCD Types 1/2/3

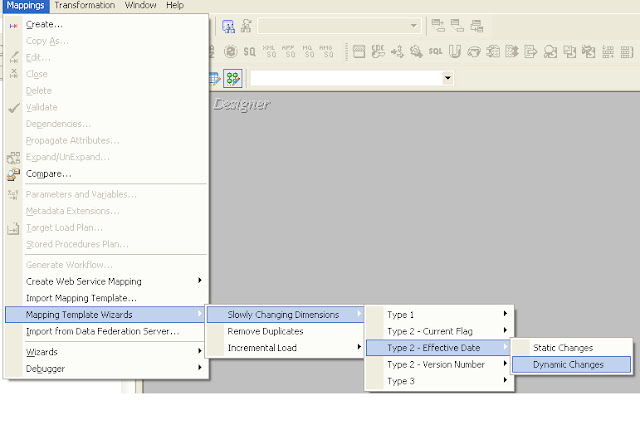

Informatica provides a wizard in the Designer tool that generates SCD Type 1, 2 and 3 logic.

In this wizard, you just provide the source, and it generates a mapping and workflow for you.

Please refer to the screenshot for the location of this wizard in the Designer.

To learn more, refer to the Informatica Help.

First post

Hi bloggers and followers,

My intention in starting this blog is to help beginners who are new to Informatica and cannot find help, and who do not have good opportunities to discuss and share their knowledge with others.

I was in the same situation in the early stages of learning Informatica.
